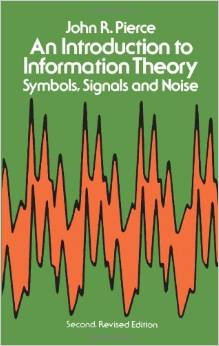

I usually wimp out when it comes to learning hard mathematical stuff like what is required to have a working understanding of Information Theory. In this case, though, I am glad I let Christian convince me to give it a shot because it appears to be fundamental to things like how the brain works, intelligent design, statistical inference, cryptography, quantum computing and a ton of other stuff that is either related to my work or among my avocational interests. I looked around for a decent introductory book that did not get so bogged down in the math that the big picture never emerged. John R. Pierce’s book An Introduction to Information Theory: Symbols, Signals and Noise seemed to be the almost universal choice to meet this criterion.

I am halfway through the first chapter of the book. It has become abundantly clear that a full understanding of Information Theory is not really possible without engaging with the math at a deep level. Nevertheless, a review at Amazon made an observation about the book that makes me think I am on the right path. I might need to read one or more additional books to arrive at the working understanding I want, but this one will definitely get me started at a level that does not discourage me from taking the next steps. Here is an excerpt from the review:

The book is geared towards non-mathematicians, but it is not just a tour. Pierce tackles the main ideas just not all the techniques and special cases. Perfect for: anyone in science, linguistics, or engineering.

Another thing that is abundantly clear is that Christian, in his current position with his current major professor and research sponsor, has an exceptional opportunity to get a strong grounding in Information Theory, and that such a grounding will serve him very well in whatever technical research pursuit he chooses when he finishes this degree. His first research project is the solution of a difficult problem that engages specifically with the material I am reading about, but with mathematical rigor beyond the scope of the book.

If the material is not too tedious for a general blog like this, I plan to write about it more because it is so interesting. I am early in the book and engaged with the topic of entropy as it is used in the field of Information Theory. Entropy has a very specific definition in this context, and it is different from entropy as that word is used in thermodynamics or statistical mechanics. The bigger deal for me is that I can see it has important ramifications even for the work I do in my day job.
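For anyone who wants to see what that specific definition looks like in practice, here is a minimal sketch. It assumes the standard Shannon definition, where entropy is the negative sum of p times log base 2 of p over all possible outcomes, measured in bits. The function name `shannon_entropy` and the coin examples are my own illustration and are not taken from Pierce's book.

```python
import math


def shannon_entropy(probs):
    """Entropy, in bits, of a discrete distribution given as a list of probabilities.

    Hypothetical helper for illustration only.
    """
    if not math.isclose(sum(probs), 1.0):
        raise ValueError("probabilities must sum to 1")
    # Outcomes with p == 0 are skipped; they contribute nothing to the sum.
    return -sum(p * math.log2(p) for p in probs if p > 0)


# A fair coin is as unpredictable as a two-outcome source can be.
fair_coin = shannon_entropy([0.5, 0.5])

# A heavily biased coin is more predictable, so each flip carries less information.
biased_coin = shannon_entropy([0.9, 0.1])

print(f"fair coin:   {fair_coin:.3f} bits")
print(f"biased coin: {biased_coin:.3f} bits")
```

The fair coin comes out at one bit per flip and the biased coin at a bit under half of that, which matches the intuition that the more predictable a source is, the less each new symbol tells you.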

Betty Blonde #183 – 03/30/2009

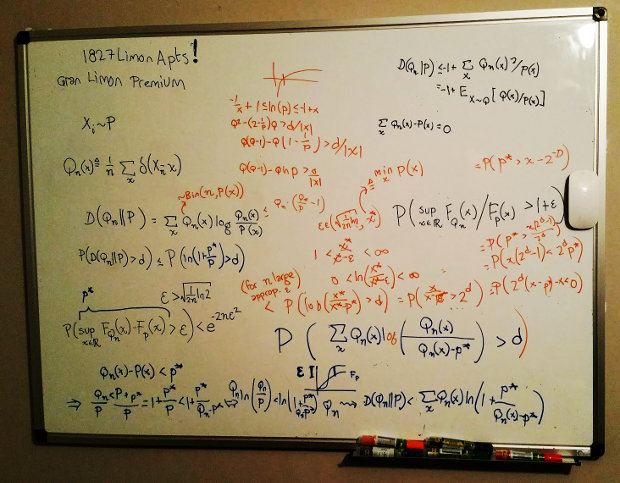

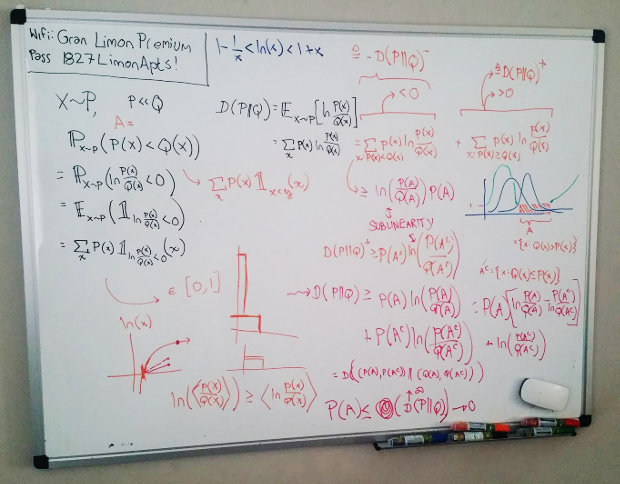

We saw the April 30, 2017 whiteboard (below) when we first arrived at his apartment on our move to Centralia, WA. Christian hopes he is on the verge of his first (semi) important, first-author publication in a major academic journal. The work for that paper is pretty much done. He has to refine the verbiage and get past the whole scholarly review thing, which is never a sure thing, but he has something that is pretty solid. The two whiteboards below are two consecutive days of work. I thought he spent a lot of time at the computer, but that is not really how he works. He looks at the whiteboard and then he just thinks. His job, his professor tells him, is to think. He has a second paper in mind. He hopes it will be better than the first. The first is solid, something that needed to be worked out. The second, however, is something that might be a true innovation. Something new, not yet considered, that contributes to the field. We hope so, but it is hard to know. Even after a paper like that is published, its importance might not even be known in the lifetime of the author. Truly interesting stuff. AND the whiteboards look really cool.

If I have the time right, Christian, at this very moment, is presenting his Information Theory research at MIT Lincoln Laboratory. This is the first time he has done this kind of formal presentation (with a tie and all that). It is the culmination of a full year of research in a brand new (to Christian) area of Mathematics and Electrical Engineering. Lorena and I are on pins and needles waiting to hear how it went. If all goes well, this should eventually turn into a refereed conference paper and, with expanded research and content, possibly even a refereed journal article.
