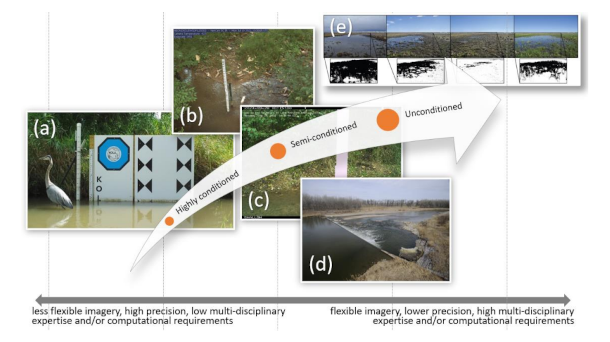

Troy flew to Tuscaloosa, Alabama, yesterday to attend a water conference. He will give a presentation on the work we do at the GRIME Lab. The main focus of the lab is to drive down the complexity of using ground-based imagery to answer hydrological questions. The fairly simple graphic above describes it well. The image on the left is pretty hard to set up and maintain, but it reduces the complexity of the image processing task because of the vision targets in the image. The image on the right is way easier to set up because nothing has to be installed or maintained in front of the camera, but the processing is way harder because there are no physical references in the scene for real-world unit calibration or for detecting camera motion. We are going to be able to watch Troy’s presentation online this afternoon.
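To show why the vision targets make the processing easier, here is a minimal sketch of the unit calibration idea. It is only an illustration, not the lab's actual code: the function names and all of the numbers are made up, and it assumes the thing being measured sits in roughly the same plane as the target and that the camera looks at that plane more or less straight on.

```python
import math


def scale_from_target(p1, p2, known_distance_m):
    """Return meters per pixel from two target points a known distance apart."""
    pixel_distance = math.dist(p1, p2)
    if pixel_distance == 0:
        raise ValueError("target points must be distinct")
    return known_distance_m / pixel_distance


def pixels_to_meters(pixel_length, meters_per_pixel):
    """Convert a length measured in pixels to meters using the target scale."""
    return pixel_length * meters_per_pixel


# Hypothetical numbers: two target centers 0.50 m apart show up 200 px apart.
m_per_px = scale_from_target((100.0, 400.0), (300.0, 400.0), 0.50)

# Hypothetical measurement: a water line 120 px above some reference point.
water_line_height_px = 120.0
water_height_m = pixels_to_meters(water_line_height_px, m_per_px)
```

Without a target in the scene there is no known distance to plug in, so the scale has to be recovered some other way, which is a big part of what makes the image on the right harder.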

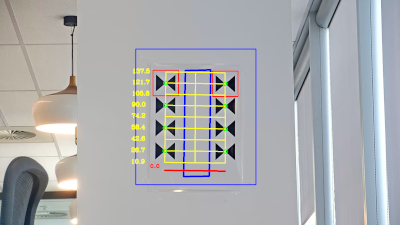

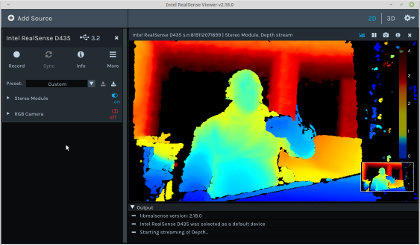

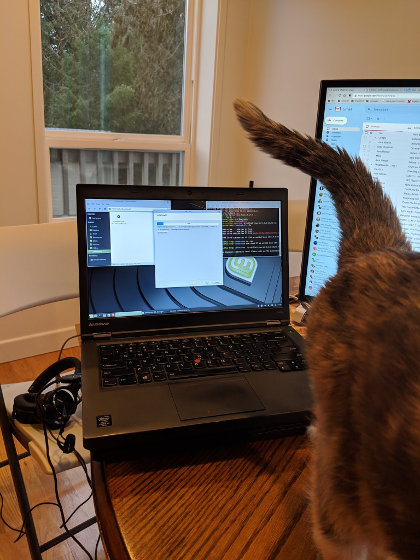

I started a new project this week. I am helping a buddy on a three-dimensional tracking, measuring, and guidance problem. We each bought a RealSense 2D/3D camera. I bought a refurbished ThinkPad laptop with a USB 3.0 port with this project in mind. The USB 3.0 port provides faster throughput of image data. It was really easy to get the thing up and running. It will be quite a bit more work to control the camera programmatically.

The main area of the basement office has been getting painted over the last week or so. I have been relegated to the dining room table. When I moved back down and was shuffling things around, I found the lights Gene made for the bean sorting project. I am going to get them sent off to him so he can start sending me some images. We might get lucky and have our original setup work, but I think that is pretty unrealistic. We will definitely have to make modifications quite a few times until we get the whole lighting design tweaked to the point that it works with the falling beans. That is not to mention the fact that we have not even started on the mirror setup to see both sides of each bean.

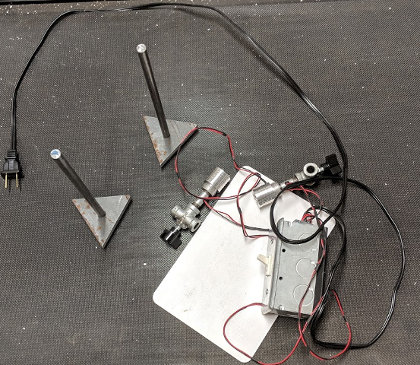

I was able to advance on the bean sorter program sufficiently to send the computer and camera off to Gene so he can start working on the lighting. I am amazed that we continue to move forward. This project is not moving along at lightning speed, but with Gene’s efforts and great mechanical skill and knowledge, we make steady progress. Hopefully, he will be able to take some images of dropping beans. That will allow us to see the spread of the beans and whether I can see them well enough in the images to do the calculations needed to sort them properly. The next big challenge is two-fold: 1) getting the beans to fall as straight as possible and 2) getting the mirror set up. After that, we will attack the lighting.

The new $243 plus tax computer arrived today and I have to admit that it is great. I loaded Linux Mint, OpenCV, Boost, Qt Creator (only for the IDE), and the Wt libraries, downloaded the bean sort code from Subversion, and had it compiling in three hours or so. That includes building OpenCV, Boost, and the Wt libraries from source.

A couple of days ago, I broke down and bought a refurbished laptop for the bean sorting project. It surely seems to be a smoking good deal at $243.09 plus tax. I have been working to get the thing running on a Raspberry Pi and that works fine, but it is way more hassle than we need during the development stage. It was necessary to hook up a keyboard, a mouse, a monitor, and the camera, which, during the development stage, needs to be moved around a lot. It is just easier to do it on a laptop.

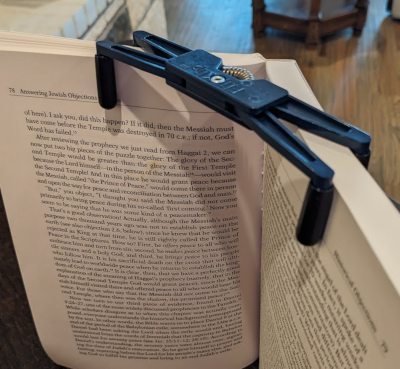

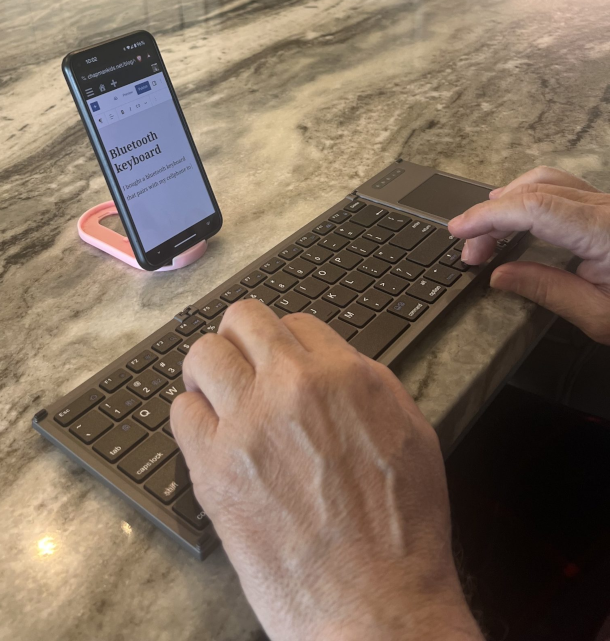

I got a book for Christian at Christmas time. While he was with us, I read the preface. I liked it so much, I bought it for Kindle on my phone. The premise is that the business model represented by Google will be supplanted by a business model with blockchain money that is fundamentally more secure and monetarily stable. I love the way the author, George Gilder, writes and I believe he is right on this. This is not the first time he has rightly called a sea change like the one this would represent.

I lost my wallet on my last trip to Boston and suffered through the pain of cancelling all my credit cards, then ordering new ones along with a replacement driver's license and a replacement Nexus card. When Christian lost his wallet, he did something about it that he recommended to Lorena and me. He bought a two

Kelly sent this photo of her and Christian yesterday. They had a pretty good time in San Francisco over the New Year. More than anything, I think they are pretty tired. Lorena and I had a quiet day at home because I was still suffering from the tail end of a cold. We DID have a big steak with a grilled onion and a baked potato. Also, I spent most of the day working on the bean sorting project. Gene has made really big progress, so I need to get an application going so that he can have a way to see what the camera captures as the beans drop. That will allow him to develop the lighting. It is a little more complicated than just creating a capture application. We really need to find a way for me to upgrade his program (running on a Raspberry Pi) over the internet. I have done this before, so it will be great to get a little more experience at doing this sort of thing.
